By: Nate Ernst, Autonomy Global Ambassador – Energy

Wildfire mitigation for utilities is not a capability gap. It is a stack configuration problem that sits squarely at the intersection of energy reliability, grid resilience and escalating wildfire risk. At DistribuTECH 2026 in San Diego, the Wildfire Risk Mitigation and Vegetation Management Symposium revealed that when utilities treat wildfire as a system-of-systems challenge, rather than a search for a silver-bullet tool, they can materially reduce ignition risk while keeping power flowing in high-threat environments. As part of the Symposium, I delivered a session on the evolving wildfire technology landscape and then moderated a panel discussion featuring technology providers from AiDASH, Cyberhawk, Exacter, Mosaic, and Sharper Shape, who offered a practitioner-level view of where utilities are making progress and where they are still stuck. This article provides the highlights of those presentations.

The Energy–Wildfire Problem Is Now a Core Grid Problem

Grid-caused ignitions have become a central part of the wildfire problem. The stakes are not abstract. Across the U.S., from 2020 to 2025, utility-related fire starts have been linked to approximately 4.1 million acres burned, nearly one in ten U.S. acres burned over that period. At the same time, total annual burned area has become highly variable year to year. This makes planning more difficult and raises the stakes for proactive mitigation, instead of reactive response.

On top of this, regulators, public perception, constrained budgets and an overwhelming number of technology options put intense pressure on utilities to show measurable progress on wildfire mitigation. For electric utilities, this creates a dual mandate to keep the lights on under growing load and extreme weather, while driving down ignition probability and regulatory exposure.

Wildfire Mitigation Is a Stack Problem, Not a Single-Tool Fix

Wildfire technology decisions are hard precisely because utilities sit at the nexus of public scrutiny, constrained budgets and an overwhelming number of vendor options. No single technology solves wildfire risk for every utility, in every terrain, under every regulatory regime. Instead, effective mitigation depends on building a stack that reflects local terrain, vegetation, asset density, fire behavior, regulatory expectations and internal operational constraints. The challenge is configuring and integrating those technologies into something that actually changes what crews do in the field before the next red-flag event.

At the same time, every utility must solve a different puzzle. Terrain, vegetation types, wind regimes, fuel moisture patterns and historic fire behavior vary dramatically between service territories. This means stack configuration must be local, even when tools are global.

Regulatory pressure also differs by jurisdiction. Some utilities operate under aggressive wildfire mitigation plan requirements. Others work in earlier stages of formal oversight. Cost is similarly contextual. What looks expensive in one region might be justified in another where communities sit directly in the wildland–urban interface and fire return intervals are shortening.

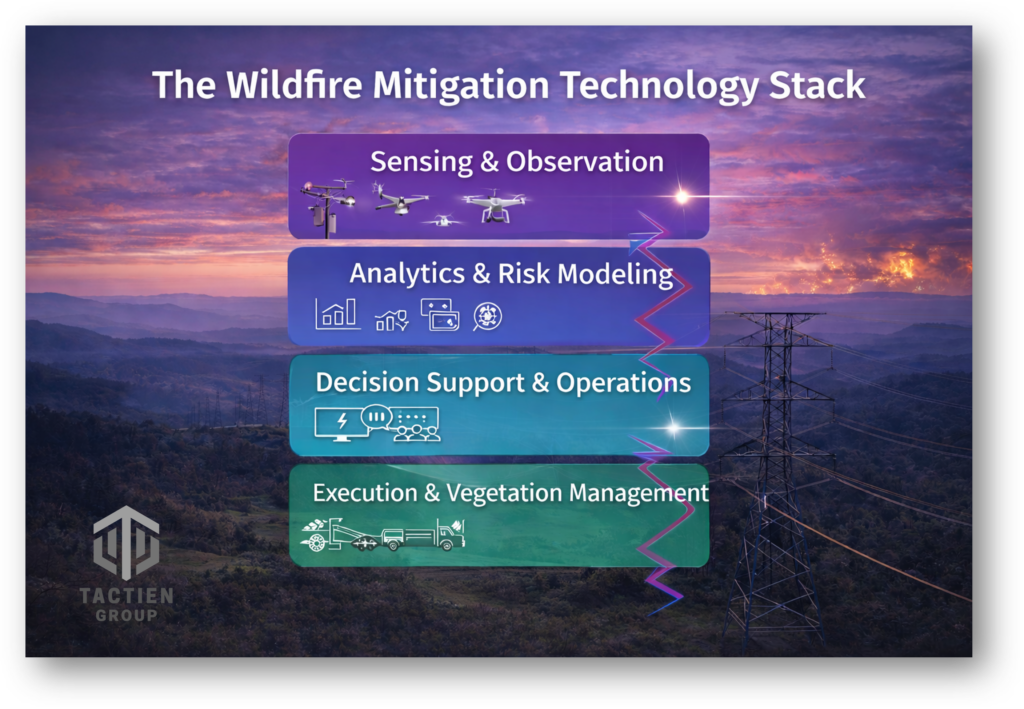

Despite this variability, a practical way to think about wildfire mitigation technology involves a four-layer stack framework: sensing, analytics, decision support and execution. Each layer has distinct strengths and tradeoffs. The value compounds when they are aligned to a common operating picture instead of operating as isolated projects.

Sensing: Seeing the Grid and the Fire Environment

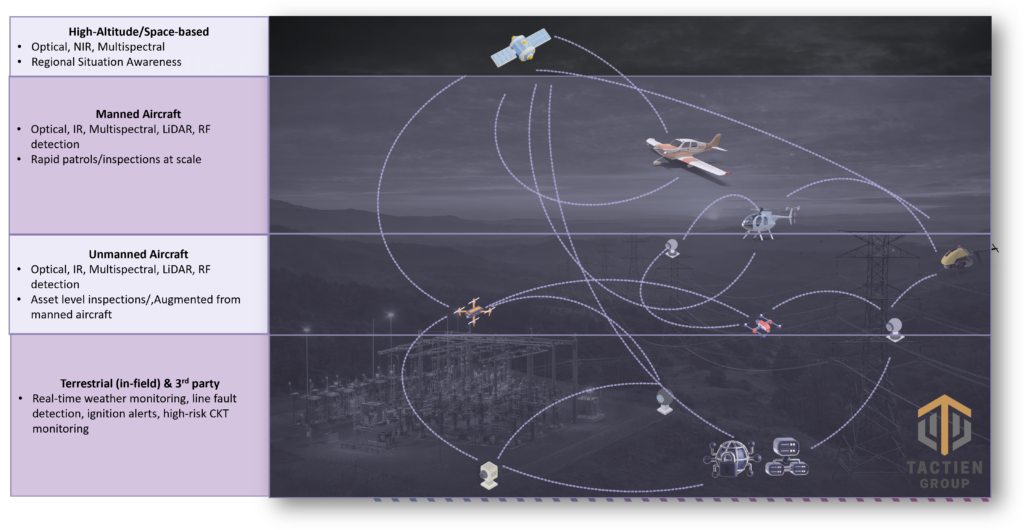

In the sensing and observation layer, utilities now draw from a spectrum of airborne and terrestrial sources: satellites, high-altitude or manned aircraft, unmanned aircraft systems (UAS), fixed sensors along the right-of-way, and third-party data. Satellites and wide-area platforms help determine where to look and offer broad coverage and early indications of changing conditions. Crewed and uncrewed aircraft deliver corridor- and asset-level truth that can be translated into targeted work.

Matt Zafuto of Cyberhawk highlighted how close visual inspection at scale, delivered at a reasonable price point with high-fidelity data, has become a game changer for understanding grid condition in the context of wildfire risk. At the same time, sensing programs must contend with revisit latency, weather constraints, operating cost, operational complexity and the very real risk of alert fatigue if data streams are not tightly managed.

Analytics: Turning Data into Wildfire Intelligence

Analytics and risk modeling sit between raw data and meaningful wildfire intelligence. Platforms that combine vegetation condition, asset health, ignition probability and fire spread behavior can help utilities prioritize work where probability times consequence is highest, rather than treating all miles or structures as equal.
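As a minimal sketch of the probability-times-consequence idea, the Python below ranks a few spans by risk. The span IDs, ignition probabilities and consequence scores are invented for illustration; they are assumptions, not figures from the Symposium or from any panelist's platform, and a real model would derive both inputs from vegetation, asset and fire-spread data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Span:
    span_id: str                 # hypothetical identifier
    ignition_probability: float  # assumed annual probability, 0 to 1
    consequence: float           # assumed impact score, e.g. structures exposed

    @property
    def risk(self) -> float:
        # Risk expressed as probability times consequence
        return self.ignition_probability * self.consequence


def rank_by_risk(spans):
    """Return spans ordered from highest to lowest risk."""
    return sorted(spans, key=lambda s: s.risk, reverse=True)


# Illustrative values only
spans = [
    Span("SPAN-A", 0.02, 500.0),
    Span("SPAN-B", 0.10, 40.0),
    Span("SPAN-C", 0.01, 2000.0),
]

ranked = rank_by_risk(spans)
for s in ranked:
    print(f"{s.span_id}: risk={s.risk:.1f}")
```

In this made-up data, the span with the lowest ignition probability ranks first because its consequence is so much larger, which is the kind of result that treating all miles as equal would miss.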

Chris Beaufait from Sharper Shape and Mrigank Shekhar of AiDASH emphasized that analytics only create value when they are grounded in authoritative data and tuned to the realities of specific networks, not generic models. AI and machine learning are accelerating this layer, but AI must be embedded within clear governance and human accountability to be safe for utility operations.

Decision Support: From Models to Operational Plans

Decision support is where wildfire intelligence either enters the real operational world or stalls in dashboards. Utilities need decision tools that connect risk scores to specific circuits, spans and structures, link directly into work management systems and give operators a defensible way to choose what gets done now versus later.

Mosaic’s John Morris underscored how often this breaks down today. Wildfire risk models, asset registries, work management tools and compliance systems frequently live in separate environments, with no single source of truth that spans the wildfire value chain. As regulatory requirements evolve, those disconnected systems drift further apart, making it harder, not easier, to explain why certain decisions were made when conditions change.
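To make the fragmentation problem concrete, here is a small Python sketch that joins three separate exports (risk scores, an asset registry and work orders) on a shared asset ID, then lists high-risk assets that have no open work order. Every field name, ID and threshold here is hypothetical; it assumes the systems already share a common asset identifier, which is often the hard part in practice.

```python
def build_asset_view(risk_scores, asset_registry, work_orders):
    """Join three hypothetical system exports on a shared asset ID."""
    view = {}
    for asset_id, asset in asset_registry.items():
        view[asset_id] = {
            "circuit": asset["circuit"],
            "risk_score": risk_scores.get(asset_id),  # None if never scored
            "open_work_orders": [
                wo["order_id"]
                for wo in work_orders
                if wo["asset_id"] == asset_id and wo["status"] == "open"
            ],
        }
    return view


def unaddressed_high_risk(view, threshold):
    """Asset IDs at or above the threshold that have no open work order."""
    return sorted(
        asset_id
        for asset_id, rec in view.items()
        if rec["risk_score"] is not None
        and rec["risk_score"] >= threshold
        and not rec["open_work_orders"]
    )


# Illustrative data only
risk_scores = {"POLE-1": 0.82, "POLE-2": 0.35, "POLE-3": 0.91}
asset_registry = {
    "POLE-1": {"circuit": "CKT-12"},
    "POLE-2": {"circuit": "CKT-12"},
    "POLE-3": {"circuit": "CKT-40"},
}
work_orders = [
    {"order_id": "WO-100", "asset_id": "POLE-1", "status": "open"},
    {"order_id": "WO-101", "asset_id": "POLE-3", "status": "closed"},
]

view = build_asset_view(risk_scores, asset_registry, work_orders)
gaps = unaddressed_high_risk(view, threshold=0.8)
print(gaps)
```

The point of the joined view is that one record can answer why an asset was or was not worked, instead of requiring someone to reconcile three systems after the fact.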

Execution: Converting Decisions into Defensible Field Action

Execution is where the entire stack is judged, by both regulators and communities. Geoff Bibo from Exacter and others pointed out that many ignition-related failure modes are detectable if utilities look for the right signatures with the right combination of technologies. But detection alone is not enough. Everyone across the utility must understand their role, from planning and scheduling to field crews and incident response. Work must be tracked in ways that align with both safety and regulatory expectations. Analytics only matter when they change field action. The goal is not more data but better decisions, faster and defensibly tied to executed work.

Lessons Learned: Integration Reality and What’s Next

The challenge is not whether the industry has the tools. It is whether those tools are aligned, integrated, and operationalized fast enough to matter when conditions change.

From the inspection side, Cyberhawk’s Zafuto highlighted how dramatically the sensing landscape has evolved in just a few years. High fidelity visual data, once difficult and expensive to collect at scale, is now accessible enough to change how utilities allocate resources. “The close visual inspection and the ability to get close visual inspection at scale, at a reasonable price point, with high fidelity data has been a game changer,” Zafuto said. The real value, he noted, is not the sensor itself, but the ability to use that visibility to decide where crews are needed and where work can be deferred. He continued, “Taking those data points together and creating something that enables quick decision support is going to be critical.”

Yet having better data does not automatically translate into better outcomes. As the discussion shifted from sensing to analytics and risk modeling, Sharper Shape’s Beaufait emphasized that wildfire intelligence only creates value when it reaches the people responsible for acting on it. “If you can’t take that information and put it in the hands of operators, tied directly to their standard work and decision authority, they cannot make vegetation decisions or prioritize risk. Data that lives outside of operational workflows does not reduce risk,” he warned. Unused or siloed data is not neutral. “If you are collecting data from multiple vendors and it is sitting in disconnected systems or on individual machines, you have a problem. After an incident, that fragmentation is one of the first things regulators and lawyers will examine,” Beaufait added.

That concern surfaced repeatedly as panelists described the operational reality inside many utilities today. Morris (Mosaic) pointed to fragmentation as one of the largest barriers to execution. “There is no single source of truth. There are disparate systems across the enterprise,” Morris noted. Wildfire risk data, asset data, work management systems, and compliance tools often operate independently. As regulatory requirements evolve, those systems fall further out of alignment. When asked what a true single source of truth should look like, Morris candidly stated, “I don’t think it exists today, what we need. I think it’s something that the consortium of utilities need to define.”

Shekhar from AiDASH echoed that diagnosis. He described how risk insights lose context as they move across systems and teams. “Your wildfire is in one system, work orders in the third system, the asset data lies in another system and they don’t talk to each other,” Shekhar lamented. The result is delay, confusion and missed opportunity. For Shekhar, “Integration has to be built in as designed. It should not be an afterthought.”

Exacter’s Bibo brought the conversation back to failure modes and human impact. Execution challenges become even more apparent as inspection programs scale. “All of these things that cause fires are detectable if you look for the signs using the right technologies,” he emphasized. Detection often requires blended approaches rather than reliance on a single method, but detection alone is not enough. “Everybody at the utility needs to understand what their role is,” he added. Bibo also grounded the conversation in the human impact of wildfire prevention. He described visits to fire sites as a “significant emotional experience.” For utilities and technology providers, the consequences extend far beyond metrics and dashboards. They affect communities, livelihoods, and lives.

Looking forward, panelists agreed that the pace of change, especially around AI, risk modeling and vegetation intelligence, continues to accelerate. Emerging “human-in-the-loop” and agentic AI models are helping surface scenarios, recommend actions and highlight emerging risks. Even so, panelists insisted that final decisions must remain with accountable utility operators.

“AI is only as good as the data and governance behind it. Generic results are not safe for utility networks. AI must be grounded in authoritative data, integrated into decision workflows, and operated with clear human accountability,” Beaufait cautioned.

The End State: Aligned Stacks, Better Decisions

Wildfire mitigation success is not determined by any single tool. It is determined by whether utilities build a stack that fits their environment, integrate it into a common platform and operationalize the full flow from sensing to modeling to decisions to executed work. In practical terms, the key takeaways are:

- Analytics only matter when they change field action.

- Risk must be approached as probability times consequence.

- Data is only valuable if it is usable.

- Integration prevents isolated capabilities.

- “Good enough” depends on the decision and time horizon.

- The right stack depends on the utility’s specific environment. Terrain and fire behavior vary widely, regulatory pressure differs by jurisdiction, and cost is contextual rather than absolute.

- Alignment matters more than sophistication.

- The goal is not more data. The goal is better decisions, faster, and defensibly tied to action in the field.

As both wildfire risk and expectations of grid resilience rise, utilities that treat wildfire mitigation as a configurable technology stack, rather than a single procurement decision, will be best positioned to protect communities while keeping energy systems reliable. Zafuto closed with the sobering thought that the next inspection you fly might be the one that prevents the next wildfire. That sense of immediacy drives utilities to rethink not just their tools but their entire value chain, from detection to execution.